Yes, you might already know in yourself how many groups of friends you can categorize. Friends in gaming, friends in developer groups, and so on...

but do you know how big is your network?

That's my realization when I came to think that I might have too many friends, but I want to make sure that my hypothesis is correct

So, my objective is to make a web scrapper bot to scrape all my friends' lists in my profile. Then to understand the relation of each friend to the other, I also have to scrape the list of mutual friends of each friend as well.

Part 1: Scraping data

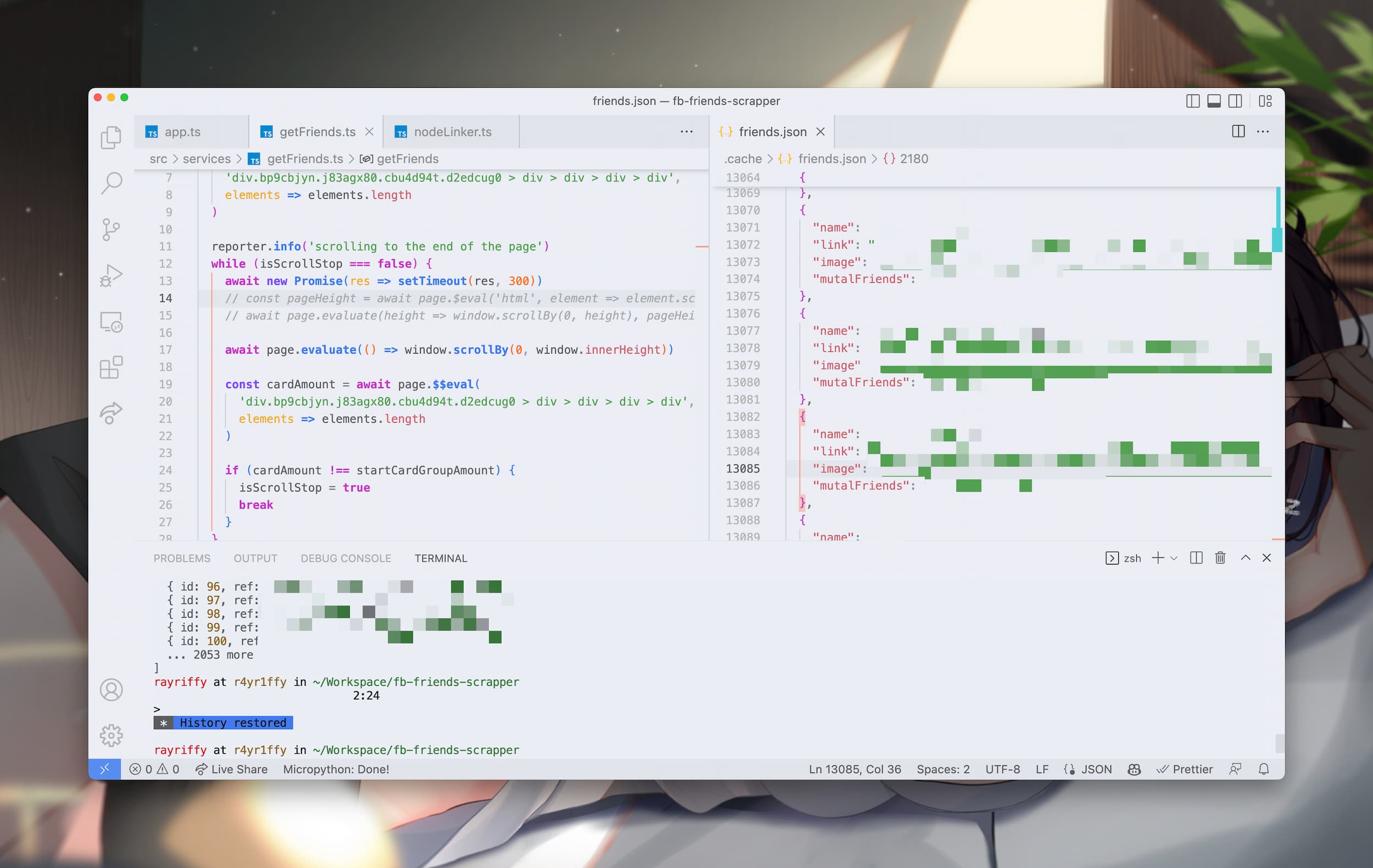

So, scraping data is a very big challenge so I thought. I think Facebook might implement some lockouts like invisible ReCaptcha to prevent bots rolling around their platform but no, you can easily implement Puppeteer bot to automatically handle authentication, saving credentials for later use, and scraping your list of friends very easily

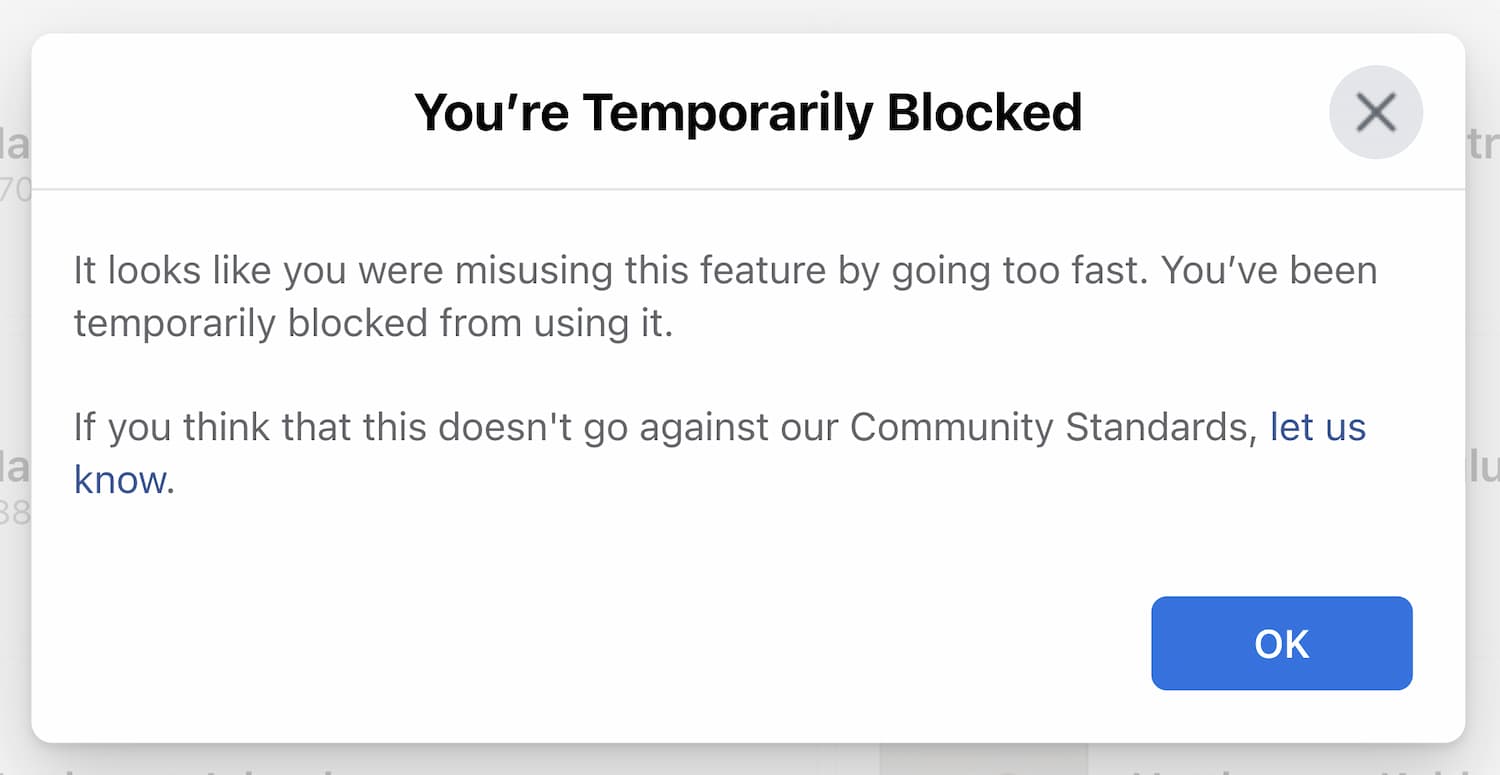

But there's a catch. Every day there's a certain limit on how many "Mutual friends" pages you can scrape. When you're about to scrape 50th friends in one CLI session, you will be temporarily locked out from going too fast.

So by calculation, I have ~2400 friends and I could only scrape 50 friends a day it would take 48 days to complete. So, take your time...unless you have a VPN

I found out that this limit is only being enforced per IP address only, that's means I can just use VPN to hop into another IP address to continue my process. I know this process still consumes a lot of time but at least I already solved and automated most of the manual work already.

Within a few days, everything has been scraped. We can move on to the next fun part

Part 2: Process the data

We already have the data but maybe too much, now it's a matter of how to process that into a usable dataset.

To answer that, we have to look into our objective. My objective to visualize it as a network, so that's would involve linking each datapoint together.

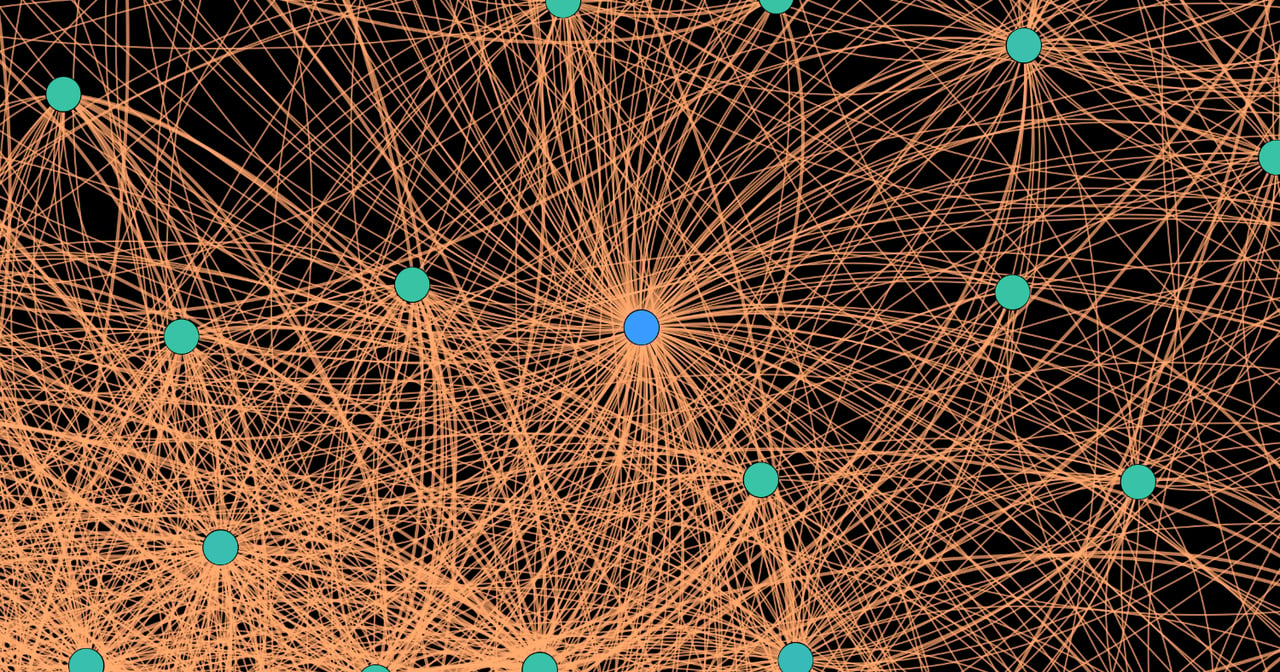

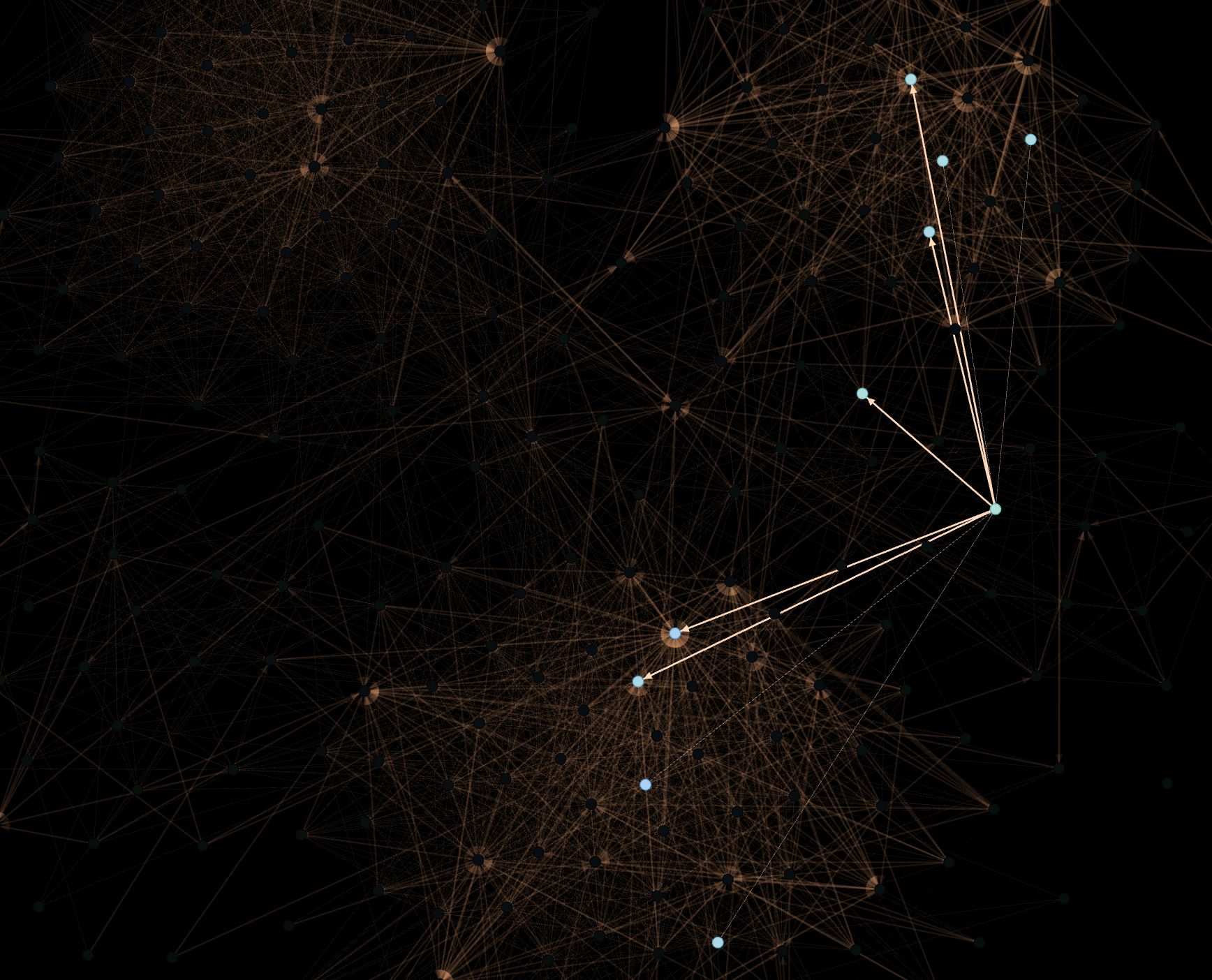

Each friend can be defined as a node, and the relation of mutual friends to others in my friends' list can be defined as edges

So, my script scrapes friend's data as JSON and I have to transform them into a unified format as much as possible to have a lot of software of choices to visualize the data. That's why I transform them into CSV

nodes.csv

| id | label |

| -- | ---------- |

| 0 | Myself. |

| 1 | Friend 1 |

| 2 | Friend 2. |

| .. | ... |

edges.csv

| from | to |

| ---- | -- |

| 1 | 2 |

| 0 | 1 |

| ... | .. |

Now, it's time to visualize!

Part 3: Guessing how the software works

There're many visualization software and libraries to use in the open-source world. My first approach is trying to visualize this data with Vis.js the results are very poor. With network growth of only 5% (~128 nodes, ~6000 edges) the browser is already struggling to keep rendering graph at a constant rate leading to main-thread blocking and crashing the browser.

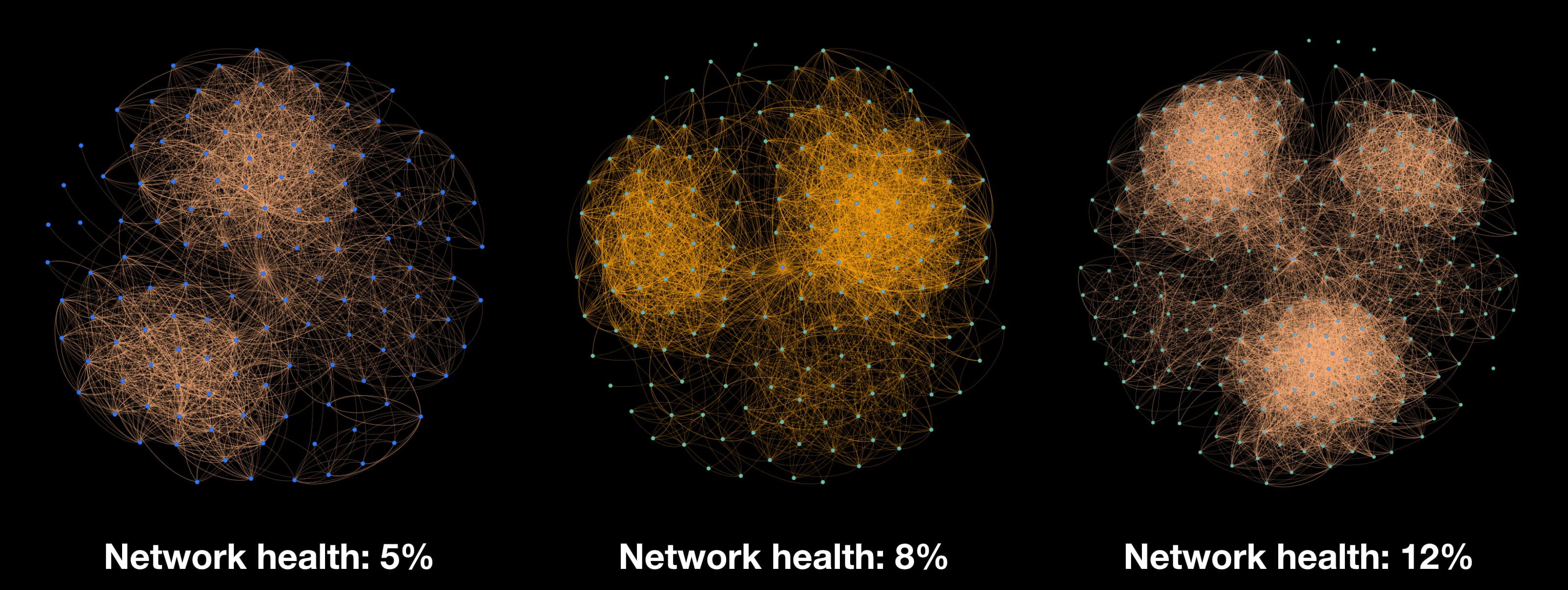

So I try the software approach instead, there's a very good library called Gephi that delivers great perforce regardless of edge size. So, I decided to use this instead. Moreover, Gephi can also label nodes with dynamic colors based on the quality of each node as well, leading to a more informative graph network. And they're already many algorithms to draw the layout of the graph as well. Here're the results

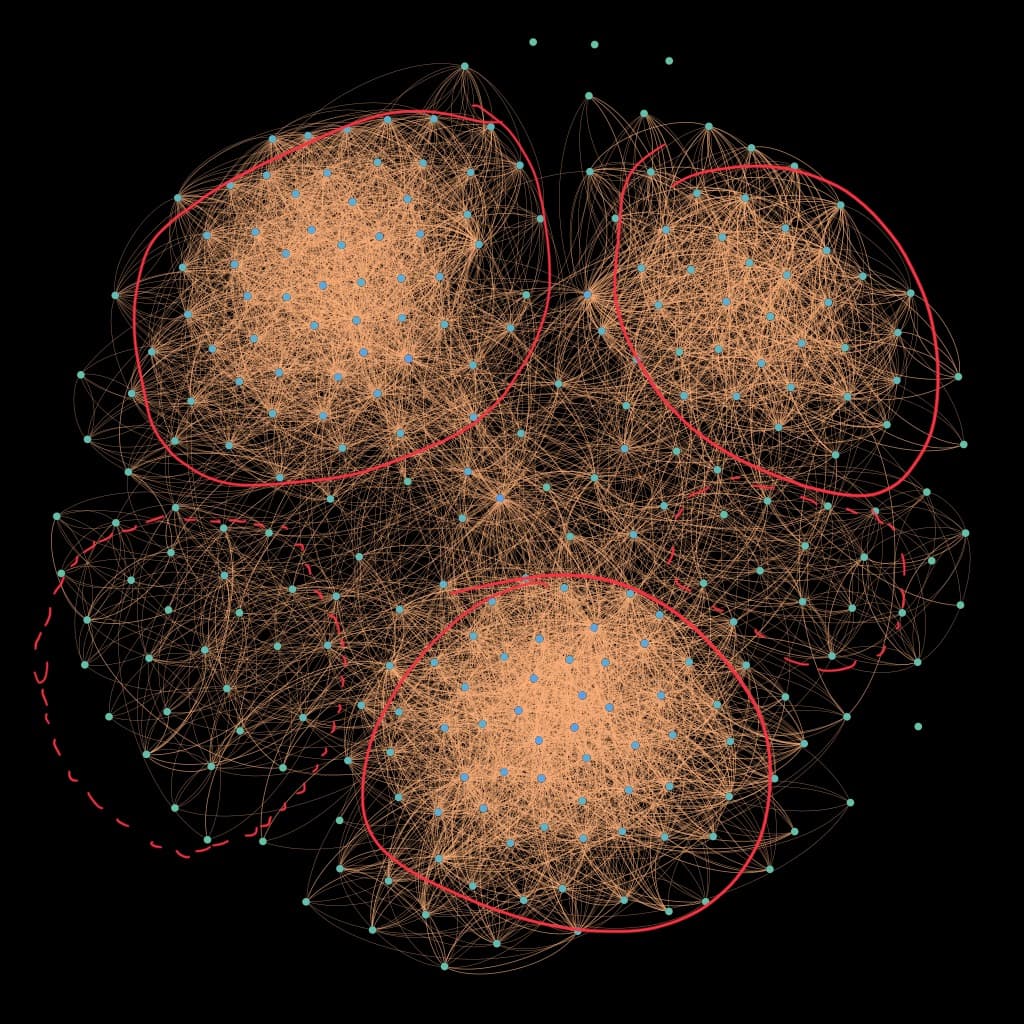

As you can see, the larger the network grows more groups start to appear. If you look at 12% network growth, you will see that there're 3 clear distinct groups in the network. This could represent a group of my friends in the developer community, game community, or academics (represented by a solid line stroke in the image below). Also, 2 new groups developing as new distinct groups as well. With a bit more time to scrape the data to let the network fully grow, it's possible to classify those groups (represented by a dotted line in the image below) and there might be more groups emerging as well.

You might be able to find some of your friends in the middle of the cluster as well. This could represent friends who help bridge the connection between groups with you.

This is one of many quick projects that I would like to try more. Also, I would not be going to publish this source code to the public repository since there're too dangerous for social engineering attacks. If there's something that interesting to share I will update the blog as well. So, stay tuned for more.