I work as a Platform Engineer. Besides our primary role of keeping the company's codebase in check and optimizing overall developer productivity, we also look for ways to squeeze every penny out of the monthly bill, in the most ridiculous ways, no matter what the cost.

Prologue: Current configuration

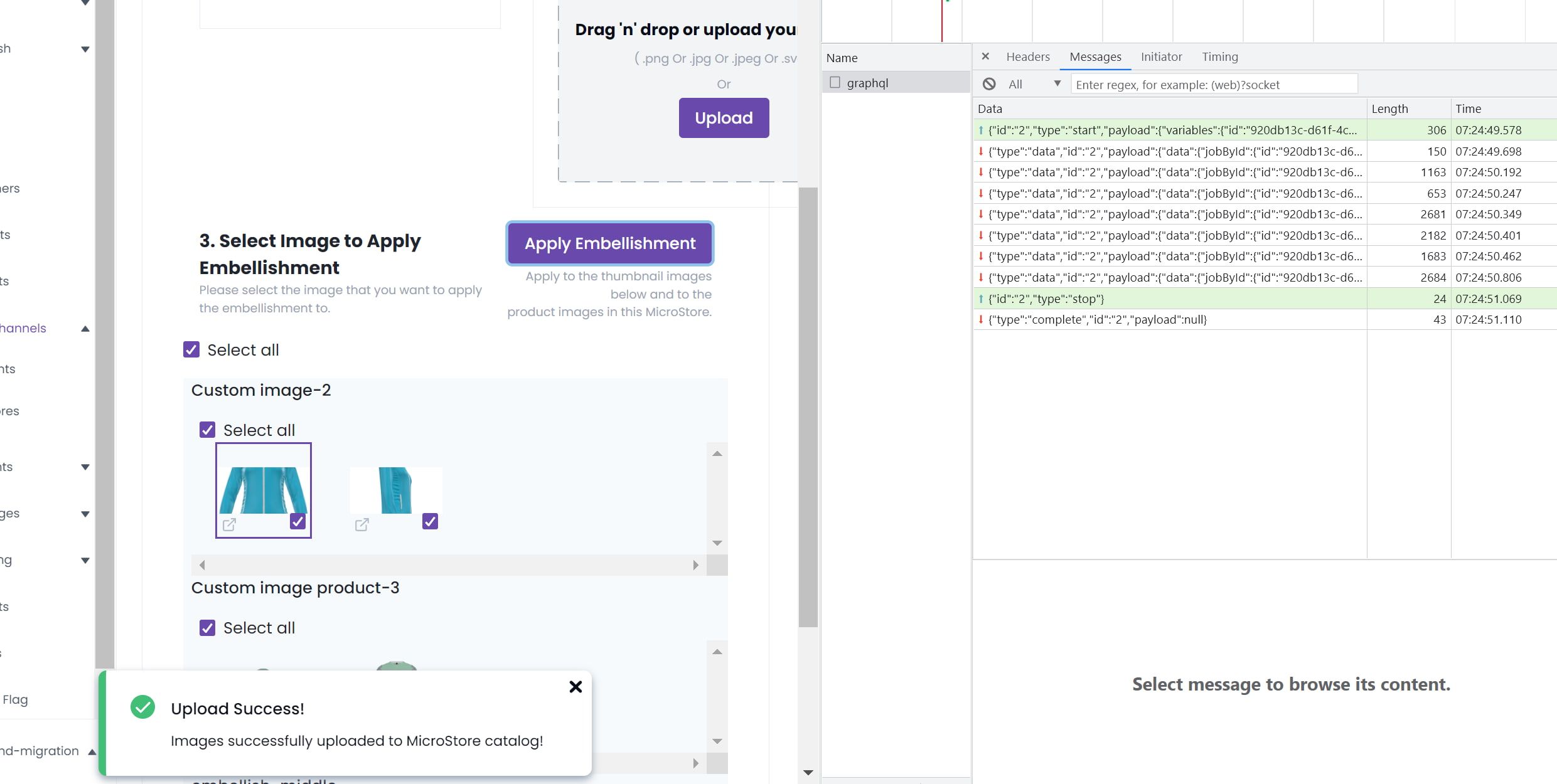

We have a design service that renders heavy images on the server side in a Lambda function.

Currently, this function runs on the x86_64 Lambda architecture, since that was the only option when it was built.

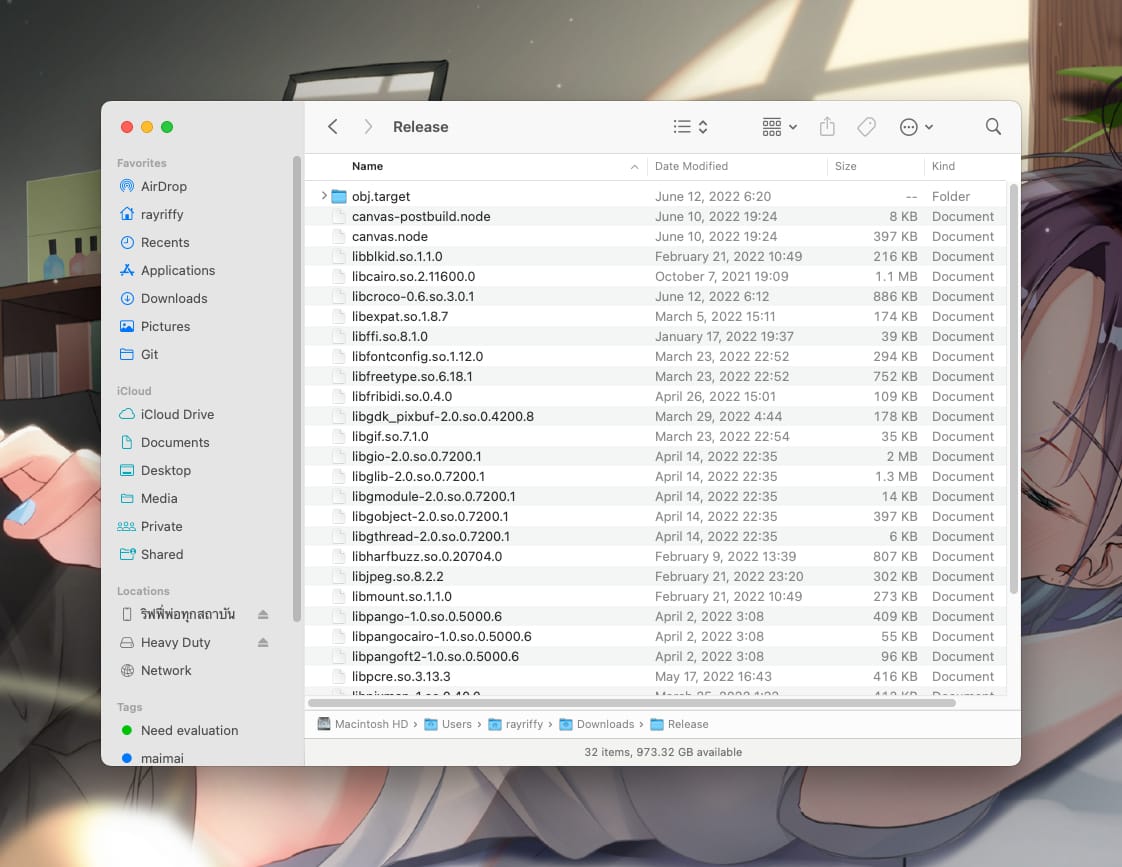

Previously, we deployed node-canvas by copying the shared libraries required by canvas.node straight into the Release/ directory deep inside node_modules, and uploading everything as part of the function's code package.

This worked great until it didn't. Fast forward to 2021: Amazon announced Lambda functions powered by its Graviton2 processors. This got the engineering team excited, since AWS advertised up to 34% better price performance than x86_64, along with the possibility of smaller package sizes and better performance.
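The headline number is easy to misread. As we understand AWS's announcement, "up to 34% better price performance" combines two things: a lower per-GB-second duration price on arm64 (about 20% cheaper) and the function hopefully running faster. Below is a rough duration-cost sketch to make that concrete. The prices are assumptions based on the us-east-1 first-tier list prices at the time of writing (check the current pricing page), request charges and the free tier are ignored, and the workload numbers and `armSpeedup` factor are hypothetical, not our real traffic.

```typescript
// Assumed per-GB-second duration prices (us-east-1 list prices, first tier).
// Check the current AWS Lambda pricing page before relying on these.
const PRICE_PER_GB_SECOND = {
  x86_64: 0.0000166667,
  arm64: 0.0000133334,
} as const;

type Arch = keyof typeof PRICE_PER_GB_SECOND;

interface Workload {
  invocations: number;   // invocations per month
  avgDurationMs: number; // average billed duration per invocation
  memoryMb: number;      // configured memory
}

// Duration cost only: GB-seconds multiplied by the per-GB-second price.
// Request charges and the free tier are deliberately left out.
function monthlyDurationCost(w: Workload, arch: Arch): number {
  const gbSeconds = w.invocations * (w.avgDurationMs / 1000) * (w.memoryMb / 1024);
  return gbSeconds * PRICE_PER_GB_SECOND[arch];
}

// Fractional saving when moving the same workload from x86_64 to arm64.
// `armSpeedup` is a hypothetical factor: 1.0 means "same duration on arm64",
// 1.2 means arm64 finishes the same work 1.2x faster (shorter billed duration).
function armSaving(w: Workload, armSpeedup = 1.0): number {
  const x86 = monthlyDurationCost(w, "x86_64");
  const arm = monthlyDurationCost(
    { ...w, avgDurationMs: w.avgDurationMs / armSpeedup },
    "arm64",
  );
  return 1 - arm / x86;
}

// Hypothetical workload, for illustration only.
const example: Workload = { invocations: 1_000_000, avgDurationMs: 800, memoryMb: 2048 };
const priceOnly = armSaving(example);        // about 0.20: the price cut alone
const withSpeedup = armSaving(example, 1.2); // about 0.33 if arm64 were also 1.2x faster
```

In other words, the price difference alone gets you roughly a fifth off the duration bill; anything beyond that depends on how your particular code performs on Graviton2.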

We couldn't start the move until a few months ago, after we raised the minimum Node version for our backend to Node 16. By now, almost all of our services have moved to the arm64 architecture, except for a few that depend on native binaries or libraries. node-canvas is one of them.

Chapter 1: The YOLO

The first approach I could think of was the obvious one: build an arm64 version of canvas.node, replace all the native libraries with their arm64 versions, and upload everything to the Lambda function. Easy, right?

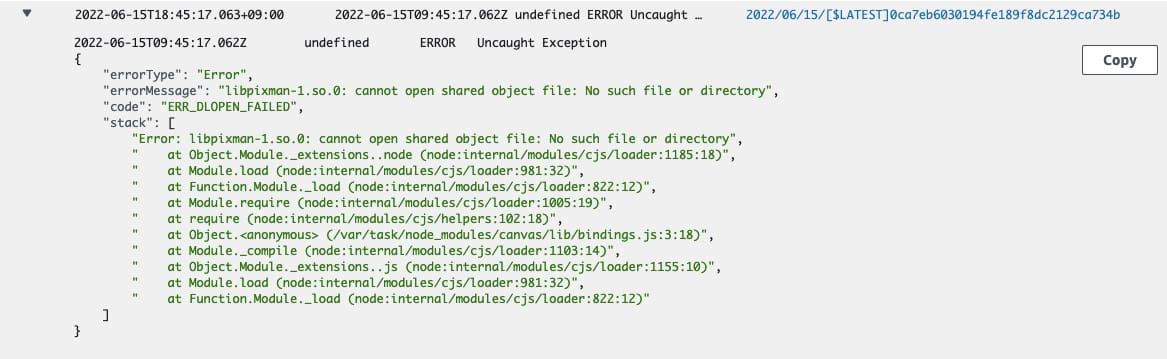
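Before uploading a rebuilt canvas.node or any of the copied .so files, it is worth confirming that they really target arm64. Below is a minimal sketch of our own (the `elfArch` helper is hypothetical, not part of node-canvas) that reads the `e_machine` field of an ELF header; the values 62 (x86-64) and 183 (AArch64) come from the ELF specification.

```typescript
// Machine values from the ELF specification (e_machine field).
const EM_X86_64 = 62;
const EM_AARCH64 = 183;

type ElfArch = "x86_64" | "arm64" | "other";

// Reads the first bytes of an ELF file (e.g. canvas.node or a .so file)
// and reports which CPU architecture it was compiled for.
function elfArch(header: Uint8Array): ElfArch {
  // e_machine lives at offset 18 and is 2 bytes long, so we need at least 20 bytes.
  if (header.length < 20) throw new Error("header too short");

  // Magic number: 0x7f 'E' 'L' 'F'
  const isElf =
    header[0] === 0x7f && header[1] === 0x45 && header[2] === 0x4c && header[3] === 0x46;
  if (!isElf) throw new Error("not an ELF file");

  // EI_DATA (byte 5): 1 = little-endian, 2 = big-endian.
  const littleEndian = header[5] === 1;
  const view = new DataView(header.buffer, header.byteOffset, header.byteLength);
  const machine = view.getUint16(18, littleEndian);

  if (machine === EM_X86_64) return "x86_64";
  if (machine === EM_AARCH64) return "arm64";
  return "other";
}
```

In Node you could feed it the start of a file, for example `fs.readFileSync(path).subarray(0, 64)`; on the command line, `file canvas.node` gives the same answer. As it turned out, though, the right architecture alone was not enough.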

Man, how wrong I was. It turns out the operating system underneath the Lambda runtime had changed in a way that broke this trick: when the function was executed, canvas.node could not load any of its libraries at all.

Chapter 2: The layer

The next obvious step was to package all of this complicated stuff as a Lambda layer instead. A layer gives us a plug-and-play solution that we can simply reuse in any other service that needs canvas in the future.

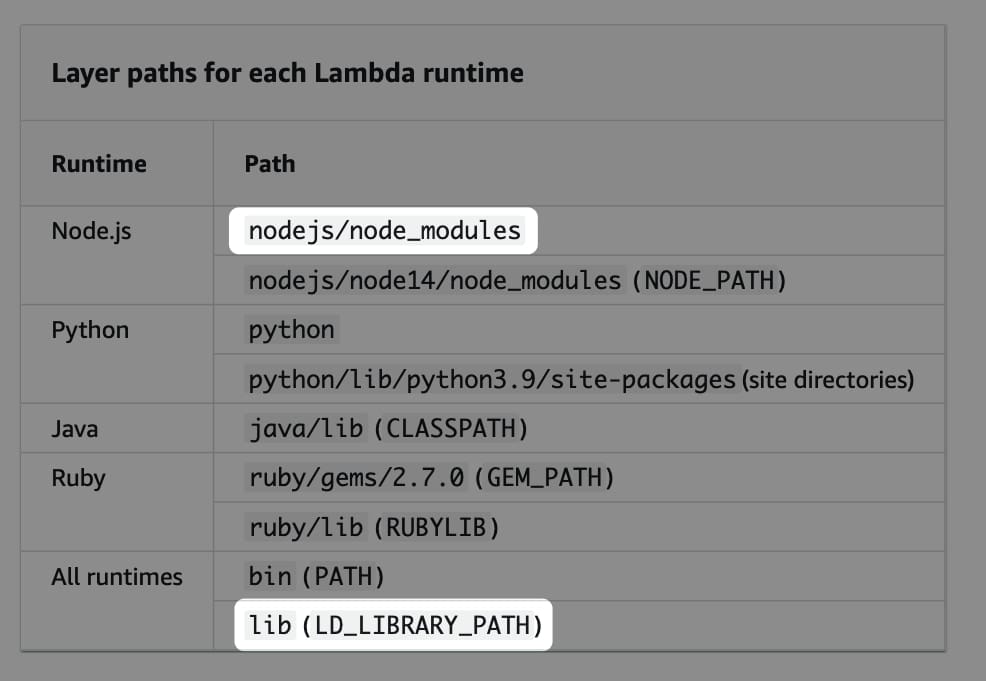

The new plan was simple: create a layer with canvas and fabric pre-installed, copy all the required libraries into the correct directory structure (image below), then zip it, upload it, and test it.

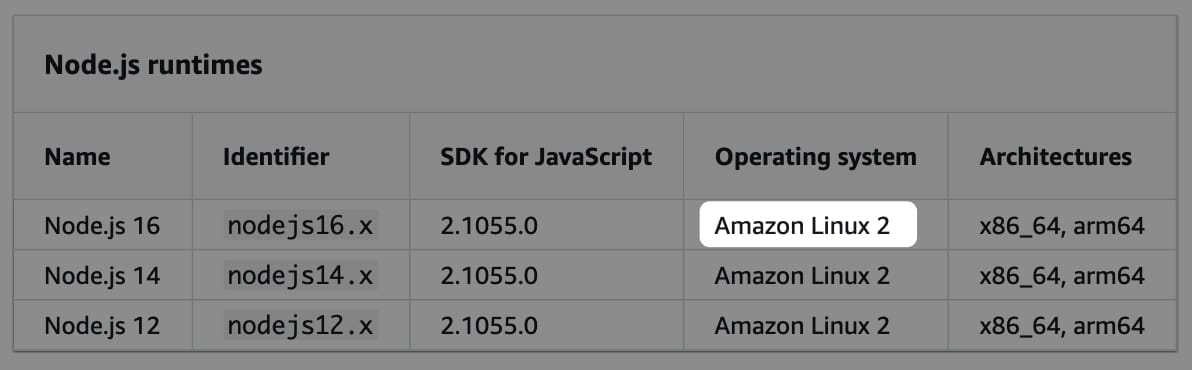
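The directory structure matters because Lambda extracts layers under /opt: Node packages placed in nodejs/node_modules end up in /opt/nodejs/node_modules, and shared libraries placed in lib/ end up in /opt/lib, which is on the runtime's LD_LIBRARY_PATH. The sketch below is a simplified, hypothetical checker (`resolveLibraries` and the library list are ours, for illustration only) that models how the loader searches LD_LIBRARY_PATH. The path string is an assumption taken from the Lambda documentation on runtime environment variables; verify it for your runtime.

```typescript
// Assumed default LD_LIBRARY_PATH of the Lambda Node.js runtime.
// Layers are extracted under /opt, so a layer's lib/ folder ends up at /opt/lib.
const LAMBDA_LD_LIBRARY_PATH =
  "/var/lang/lib:/lib64:/usr/lib64:/var/runtime:/var/runtime/lib:/var/task:/var/task/lib:/opt/lib";

// Simplified model of the dynamic loader: look for each library name in the
// LD_LIBRARY_PATH directories, in order. The real loader also consults
// RPATH/RUNPATH, /etc/ld.so.cache and default directories, which we ignore here.
function resolveLibraries(
  needed: string[],
  ldLibraryPath: string,
  exists: (path: string) => boolean,
): { found: Map<string, string>; missing: string[] } {
  const dirs = ldLibraryPath.split(":").filter((d) => d.length > 0);
  const found = new Map<string, string>();
  const missing: string[] = [];
  for (const lib of needed) {
    // First directory containing the library wins, as with the real loader.
    const hit = dirs.map((d) => `${d}/${lib}`).find(exists);
    if (hit !== undefined) found.set(lib, hit);
    else missing.push(lib);
  }
  return { found, missing };
}

// Hypothetical layer contents: files we copied into lib/ land in /opt/lib.
const layerFiles = new Set(["/opt/lib/libcairo.so.2", "/opt/lib/libpango-1.0.so.0"]);
const result = resolveLibraries(
  ["libcairo.so.2", "libpango-1.0.so.0", "libjpeg.so.62"],
  LAMBDA_LD_LIBRARY_PATH,
  (p) => layerFiles.has(p),
);
// result.missing lists libjpeg.so.62, which would still need to go into lib/.
```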

First, I needed to know which Linux distribution to build on. I asked a senior backend engineer which operating system Lambda uses, and the answer was a Red Hat-family distro. So I built everything inside the public.ecr.aws/docker/library/fedora:35 Docker image, which made sense to me at the time.

Chapter 3: The realization

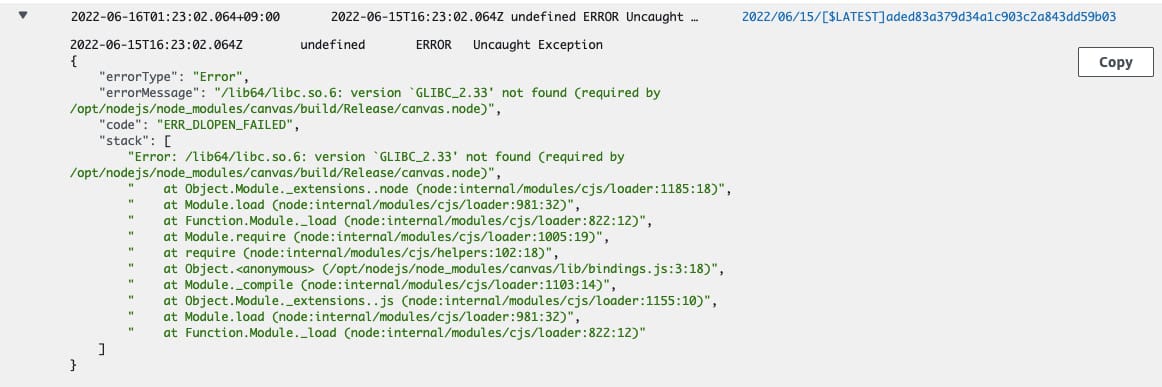

The layer was built and uploaded. Time to test, and... it broke.

The error "version 'GLIBC_2.33' not found" meant that the native libraries I had copied from the Fedora 35 image were built against a glibc that provides the GLIBC_2.33 symbol version, but the glibc in the Lambda runtime did not provide it.

So what now? The runtime could have either a newer or an older glibc; how was I supposed to know?

Well, I didn't. It turns out I hadn't read the documentation carefully: the Lambda runtime is based on Amazon Linux 2. So if I rebuilt the layer on the same operating system, it should be ready to roll!

And sure enough....IT WORKS!!!!!
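In hindsight, this class of failure can be caught before uploading. glibc keeps old symbol versions around, so in general a binary that needs GLIBC_2.x runs on glibc 2.x or newer, but never on an older one. The sketch below compares required symbol-version tags against a runtime glibc version. It assumes the Amazon Linux 2 glibc is 2.26, and the tag list is hypothetical; in practice you could collect tags with `objdump -T` on each library and check a machine's glibc with `ldd --version`.

```typescript
// Parses a glibc symbol-version tag such as "GLIBC_2.33" into [major, minor].
// Three-part tags like "GLIBC_2.2.5" are compared on their first two parts.
function parseGlibcTag(tag: string): [number, number] {
  const m = /^GLIBC_(\d+)\.(\d+)/.exec(tag);
  if (!m) throw new Error(`not a GLIBC version tag: ${tag}`);
  return [Number(m[1]), Number(m[2])];
}

// A system with glibc X provides every GLIBC_Y tag with Y <= X. Any tag newer
// than the runtime's glibc cannot be satisfied, which is what produces errors
// like "version 'GLIBC_2.33' not found".
function missingGlibcVersions(requiredTags: string[], runtimeGlibc: string): string[] {
  const [rMajor, rMinor] = parseGlibcTag(`GLIBC_${runtimeGlibc}`);
  return requiredTags.filter((tag) => {
    const [major, minor] = parseGlibcTag(tag);
    return major > rMajor || (major === rMajor && minor > rMinor);
  });
}

// Hypothetical tags from a library built on a newer distro, checked against
// the assumed Amazon Linux 2 glibc version (2.26).
const required = ["GLIBC_2.17", "GLIBC_2.25", "GLIBC_2.33"];
const missingOnAl2 = missingGlibcVersions(required, "2.26"); // ["GLIBC_2.33"]
```

Building on the same OS as the runtime sidesteps the problem entirely, which is why rebuilding on Amazon Linux 2 fixed it; a check like this is just a cheap guard for when the build image drifts.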

Epilogue: The future

If you also want to use node-canvas in Lambda, this approach lets you create your own Lambda layer with whichever versions of node-canvas and fabric you choose!

Check out the GitHub repository I published; it includes all the steps and scripts you need to create your own Lambda layer.

So... what's next? Almost all of our services now run on arm64, except for those that use puppeteer, because there are no ARM builds available for it yet. I tried building Chrome from source using the same method, but it proved too hard, so those services might be migrated to a container service instead.

And that's a wrap for this article. This isn't the only thing we've done to reduce our billing costs, though, so stay tuned for more.